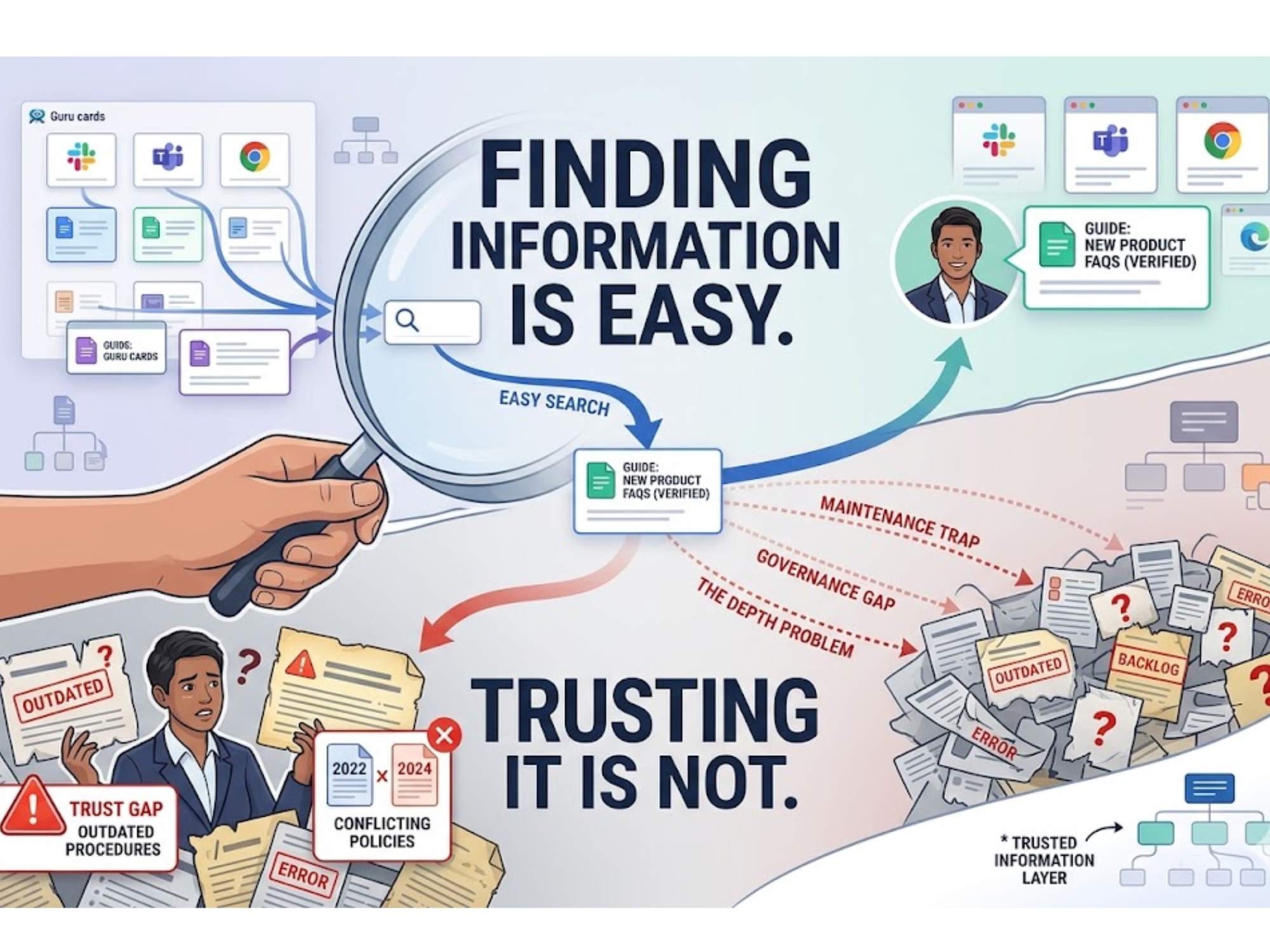

The Guru Knowledge Base Problem: Finding Information Is Easy. Trusting It Is Not

Guru's model works well when a team is small. It becomes a serious burden as organizations grow, products evolve, and procedures change.

Guru solved a real and persistent problem. Before platforms like it existed, company knowledge lived in the wrong places: buried in Slack threads, locked in the heads of senior employees, scattered across wikis that nobody maintained. Guru gave teams a structured place to capture that knowledge, verify it, and surface it where people work, right inside Slack, Teams, and the browser. For sales enablement, onboarding, and quick internal reference, it became a genuinely useful tool.

But as knowledge bases grow, a different set of problems emerges. And they tend to surface quietly, in the form of wrong answers acted on with confidence.

The Maintenance Trap: A Knowledge Base Is Only as Good as Its Last Update

Guru's model is built on cards: discrete, human-authored pieces of knowledge that need to be created, tagged, verified, and regularly updated. That works well when a team is small. It becomes a serious burden as organizations grow, products evolve, and procedures change.

Reviewers across G2 and Capterra consistently describe the same experience. Without constant gardening, a single source of truth quickly becomes a backlog of outdated information. Cards that were accurate six months ago still surface in search results, with nothing flagging that the procedure has changed or the policy has been superseded. The burden of keeping content clean falls entirely on people who have the least time for it, and it compounds over time.

The Search Problem: It Only Finds What Someone Already Wrote Down

Guru's search depends on keywords and tags. If an answer has been documented thoroughly and tagged correctly, it works. If the answer lives in a past Slack thread or a meeting transcript, or simply has not been written into a card yet, Guru returns nothing.

Users report that search degrades noticeably as content volume grows, and that finding the most relevant answer among similar cards requires more effort than it should. The underlying issue is that Guru's architecture treats knowledge as something people write down in advance. For fast-moving teams or organizations with complex technical documentation, that assumption breaks quickly.

The Depth Problem: Cards Store What Things Mean. They Do Not Say What to Do.

Even when Guru surfaces the right card, it delivers a static snapshot. It cannot tell you whether a procedure is still valid under the most recent compliance revision. It cannot trace the relationship between a product configuration, the relevant runbook, and the applicable policy. It retrieves what was written. It does not reason over what it means in context.

For teams handling customer escalations, incident response, or technical troubleshooting, this gap is significant. A card that says "follow procedure X" is helpful when procedure X is current and applicable to the specific situation. When either of those is uncertain, the card becomes a starting point for more manual research rather than a reliable answer. That is not a search problem anymore. It is a reasoning problem.

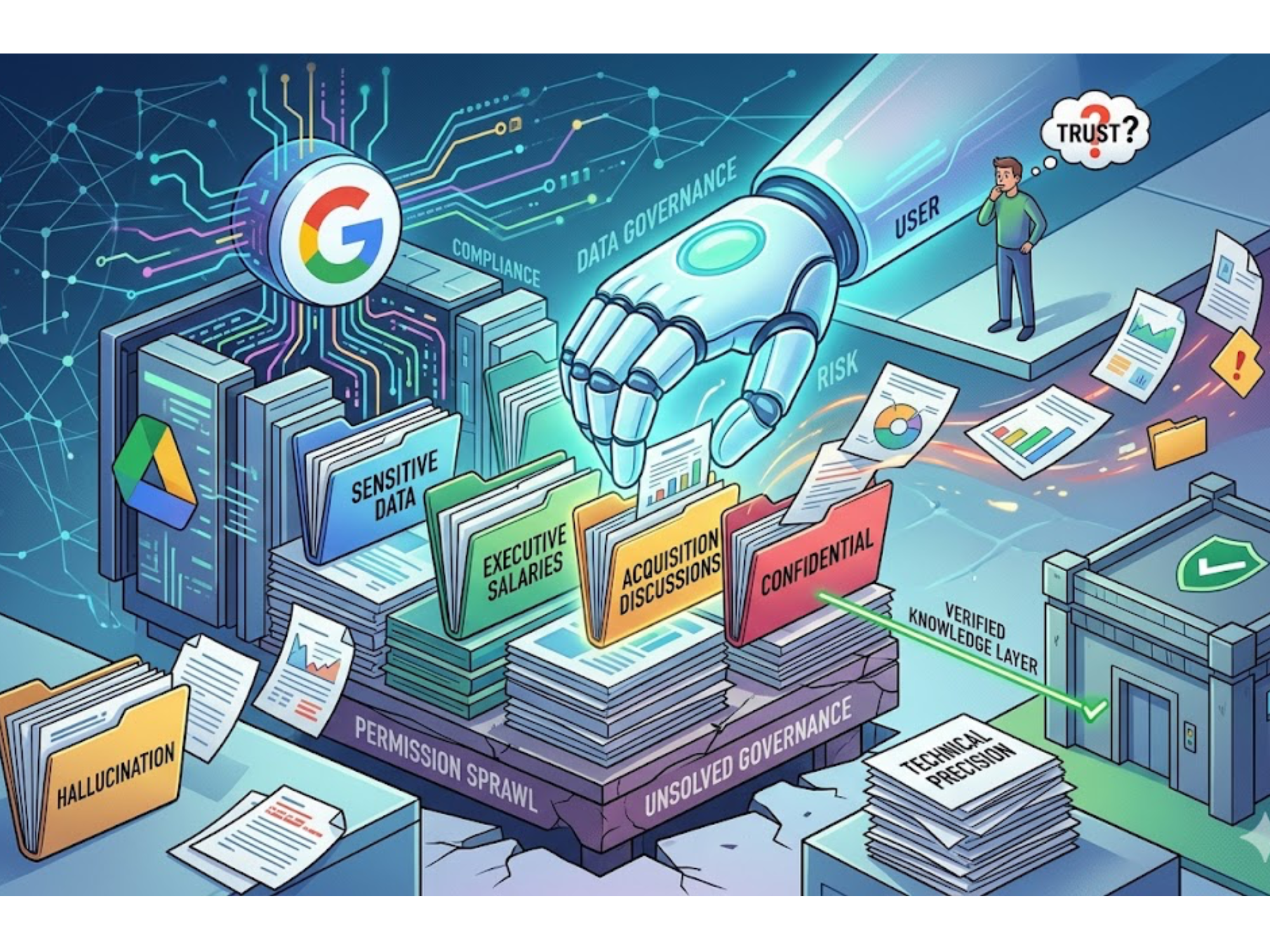

The Governance Gap: Who Owns This, and Is It Still True?

Guru offers verification reminders that prompt card owners to review content on a schedule. In practice, reminders go unanswered, ownership lapses when employees leave, and cards stay live long past their useful life. There is no automated version tracking, no audit trail showing when something changed and why, and no way to confirm that the answer you just found reflects the current state of your organization.

For teams in regulated industries or high-stakes operational environments, that ambiguity is not just an inconvenience. It is a liability.
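To make the failure mode concrete, here is a minimal sketch of the kind of audit a team might run over its own card export. It assumes a hypothetical `Card` record with an owner, a last-verified date, and a review interval; none of these names come from Guru's API, and the data is invented for illustration.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Card:
    # Hypothetical fields for illustration; not Guru's actual schema.
    title: str
    owner: str
    last_verified: date
    review_interval_days: int


def audit_cards(cards, active_employees, today):
    """Return (title, reason) pairs for cards that should not be trusted as-is."""
    findings = []
    for card in cards:
        # Ownership lapses when the owner is no longer with the company.
        if card.owner not in active_employees:
            findings.append((card.title, "owner has left"))
        # A reminder went unanswered if the review date has passed.
        due = card.last_verified + timedelta(days=card.review_interval_days)
        if today > due:
            findings.append((card.title, "verification overdue"))
    return findings


cards = [
    Card("Refund policy", "dana", date(2024, 1, 10), 90),
    Card("VPN setup", "lee", date(2024, 5, 1), 90),
    Card("Escalation runbook", "sam", date(2024, 6, 1), 30),
]
findings = audit_cards(cards, active_employees={"dana", "sam"}, today=date(2024, 6, 15))
for title, reason in findings:
    print(f"{title}: {reason}")
```

Even a check like this only reports that a card is overdue or orphaned. It cannot say whether the content is still true, which is the gap described above.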

When Knowledge Needs to Do More Than Be Found

A growing number of teams are realizing that the knowledge management problem is not really about storage or search. It is about whether the knowledge can be trusted, traced, and acted on when it matters most.

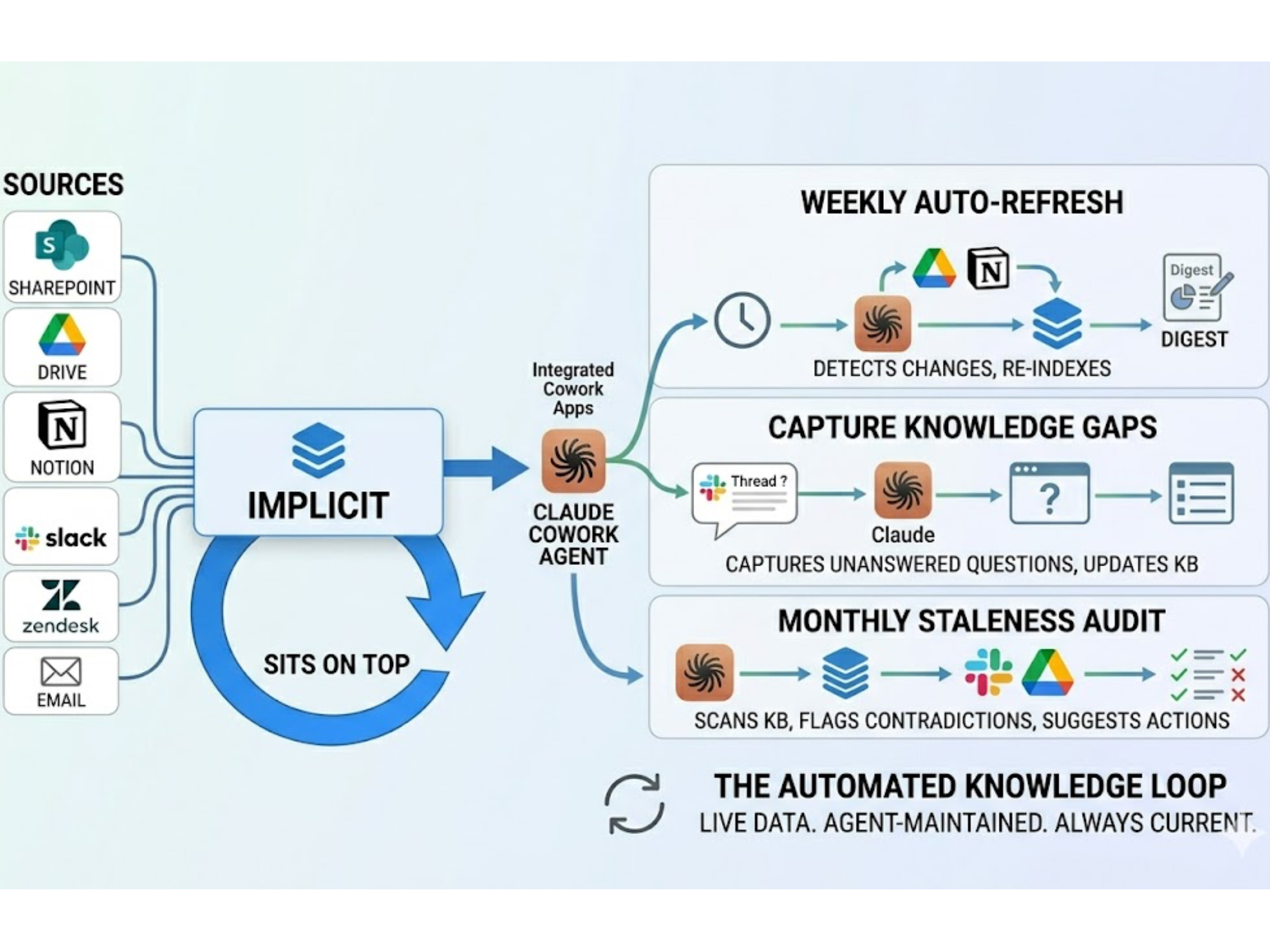

Implicit was built around exactly that premise. Rather than storing knowledge as cards to be retrieved, it builds a living knowledge layer that understands how information connects, validates answers against version lineage, and delivers cited responses that operational teams can act on with confidence.

With Implicit, relationships between documents, procedures, policies, and versions are modeled explicitly, and every answer includes a verifiable chain back to its source. You can see how that approach stacks up against Guru here.
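As a rough illustration of what explicitly modeled relationships and a chain back to the source can mean, the sketch below builds a toy in-memory graph. It is not Implicit's implementation; the class names, the `governed_by` relation, and the sample documents are all assumptions made up for this example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DocVersion:
    doc_id: str
    version: int
    text: str
    supersedes: Optional[int] = None  # previous version number, if any


class KnowledgeGraph:
    """Toy model: versioned documents plus named links between them."""

    def __init__(self):
        self.versions = {}  # (doc_id, version) -> DocVersion
        self.latest = {}    # doc_id -> highest version number seen
        self.links = {}     # doc_id -> list of (relation, target doc_id)

    def add_version(self, v):
        self.versions[(v.doc_id, v.version)] = v
        self.latest[v.doc_id] = max(v.version, self.latest.get(v.doc_id, 0))

    def link(self, src, relation, dst):
        self.links.setdefault(src, []).append((relation, dst))

    def lineage(self, doc_id):
        """Walk the supersedes chain from the latest version backwards."""
        chain, n = [], self.latest[doc_id]
        while n is not None:
            chain.append(n)
            n = self.versions[(doc_id, n)].supersedes
        return chain

    def answer(self, doc_id):
        """Return the current text plus citations for it and its directly linked documents."""
        current = self.versions[(doc_id, self.latest[doc_id])]
        citations = [f"{doc_id}@v{current.version}"]
        for relation, dst in self.links.get(doc_id, []):
            citations.append(f"{relation}: {dst}@v{self.latest[dst]}")
        return current.text, citations


kg = KnowledgeGraph()
kg.add_version(DocVersion("runbook-restart", 1, "Restart via console."))
kg.add_version(DocVersion("runbook-restart", 2, "Restart via CLI; console is retired.", supersedes=1))
kg.add_version(DocVersion("policy-change-mgmt", 3, "All restarts need a ticket."))
kg.link("runbook-restart", "governed_by", "policy-change-mgmt")

text, citations = kg.answer("runbook-restart")
print(text)
print(citations)
print(kg.lineage("runbook-restart"))
```

The point of the sketch is the shape of the result: the answer arrives with the version it came from, the policy that governs it, and the history of what it replaced, rather than as a standalone snippet.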

The first wave of knowledge management was about making information findable. The next wave is about making it trustworthy enough to rely on without a second round of verification. The question is not whether tools like Guru work. They do. The question is what teams need once "finding the card" is no longer the bottleneck.
